by Ares Cabó Carrera (ERNI Spain)

The integration of artificial intelligence (AI) into the health sector is revolutionising healthcare delivery and innovation. As AI systems become more prevalent, they are transforming how we handle data, moving from binary outcomes (presence or absence) to predictions. With AI growing more powerful by the day, we can hardly imagine what will be achievable in the coming years.

To get there, we will need guidance, and we must not forget ethics: that’s where product owners come into play. Product owners must ensure that these technologies are developed and integrated ethically and responsibly. Key ethical challenges such as data quality, bias, and diversity in data representation must be addressed to build trust in AI systems and maximise their potential benefits in healthcare.

When thinking about the ethical implications of AI, three main topics stand out: data quality, diversity in data representation and bias in AI.

Data quality

High-quality data is essential for training accurate and reliable AI models. Expert reviews, critical as they may be, are not enough to ensure it: even with them, errors can persist and lead to poor decision-making. “Garbage in, garbage out” summarises the consequences of poor data quality: we cannot rely on an AI’s results if its models have not been fed proper data. One thing product owners can prioritise is data cleaning: tools with data-centric AI approaches are currently available that assist with it, addressing issues such as off-topic samples, near duplicates and label errors, among others. This “self-clean” concept uses self-supervised learning to detect and rectify data issues, either through human-in-the-loop processes or fully automated systems, significantly enhancing efficiency.
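To make the near-duplicate issue more tangible, here is a minimal Python sketch. It does not use self-supervised learning; it swaps in a much simpler technique, Jaccard similarity over word tokens, purely for illustration. The function names, the sample notes and the thresholds are all assumptions, not part of any specific tool.

```python
import re
from itertools import combinations


def tokens(text):
    """Lower-case a text and return its set of word tokens."""
    return frozenset(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a, b):
    """Jaccard similarity: shared tokens divided by all distinct tokens."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def near_duplicates(samples, threshold=0.8):
    """Return (i, j, similarity) for every pair at or above the threshold."""
    token_sets = [tokens(s) for s in samples]
    flagged = []
    for i, j in combinations(range(len(samples)), 2):
        score = jaccard(token_sets[i], token_sets[j])
        if score >= threshold:
            flagged.append((i, j, score))
    return flagged


# Hypothetical free-text samples, invented for this example.
notes = [
    "Patient reports mild chest pain after exercise",
    "Patient reports mild chest pain after exercise.",
    "Patient reports mild chest pain following exercise",
    "No abnormalities found in the blood panel",
]

for i, j, score in near_duplicates(notes, threshold=0.7):
    print(f"samples {i} and {j} look similar (Jaccard {score:.2f}); send to human review")
```

Flagging pairs rather than deleting them keeps a human in the loop; a fully automated variant would drop one sample of each pair instead.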

Diversity in data representation

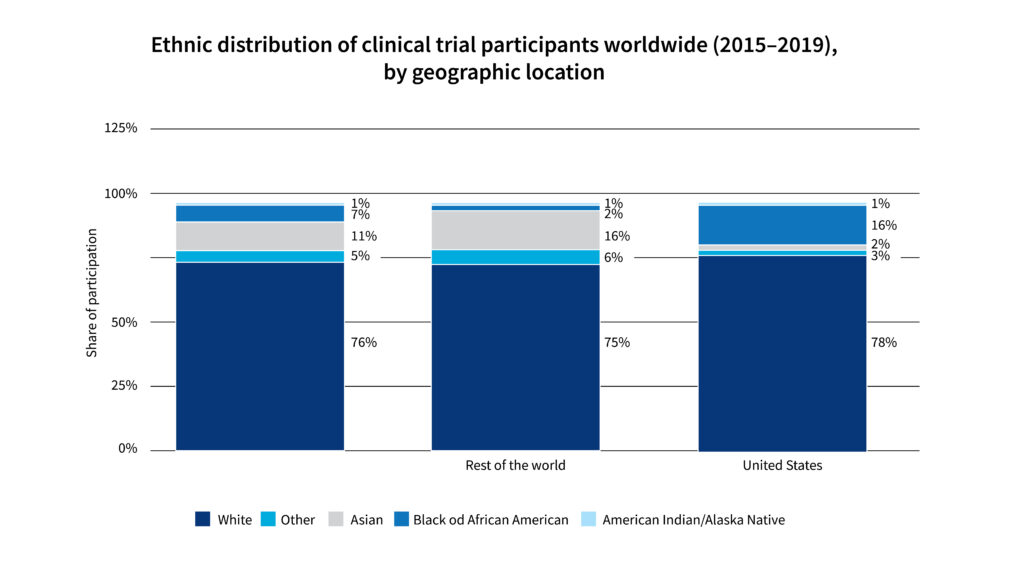

Diverse data sets are crucial to ensure AI systems are inclusive and representative of all populations, yet the lack of diversity in data representation is a challenge in its own right. For example, between 2015 and 2019, more than 70% of clinical trial participants globally were white. Homogeneous data sets can result in AI systems that fail to account for the needs of underrepresented groups, leading to biased outcomes. Product owners must prioritise diversity in data collection to ensure AI systems are equitable and effective across different demographics. This involves widening the range of end users to consider, as well as that of stakeholders.

Image: Statista
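One way to check representation in a data set is to compare each group’s share with a target share. The sketch below is only an illustration under stated assumptions: the group labels, the target shares and the 5-point tolerance are hypothetical, and in practice the reference would come from the intended patient population.

```python
from collections import Counter


def representation_gaps(groups, reference_shares, tolerance=0.05):
    """Compare each group's share in the data set with a reference share.

    Returns {group: (observed_share, expected_share)} for every group whose
    observed share falls more than `tolerance` below the reference.
    """
    counts = Counter(groups)
    total = len(groups)
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        if expected - observed > tolerance:
            gaps[group] = (observed, expected)
    return gaps


# Hypothetical records: one self-reported group label per participant.
participants = ["A"] * 80 + ["B"] * 15 + ["C"] * 5
# Hypothetical target shares, invented for this example.
target = {"A": 0.60, "B": 0.25, "C": 0.15}

for group, (observed, expected) in representation_gaps(participants, target).items():
    print(f"group {group}: {observed:.0%} of data vs {expected:.0%} expected")
```

A report like this can feed directly into backlog prioritisation, for example as an acceptance criterion for new data collection.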

Bias in AI

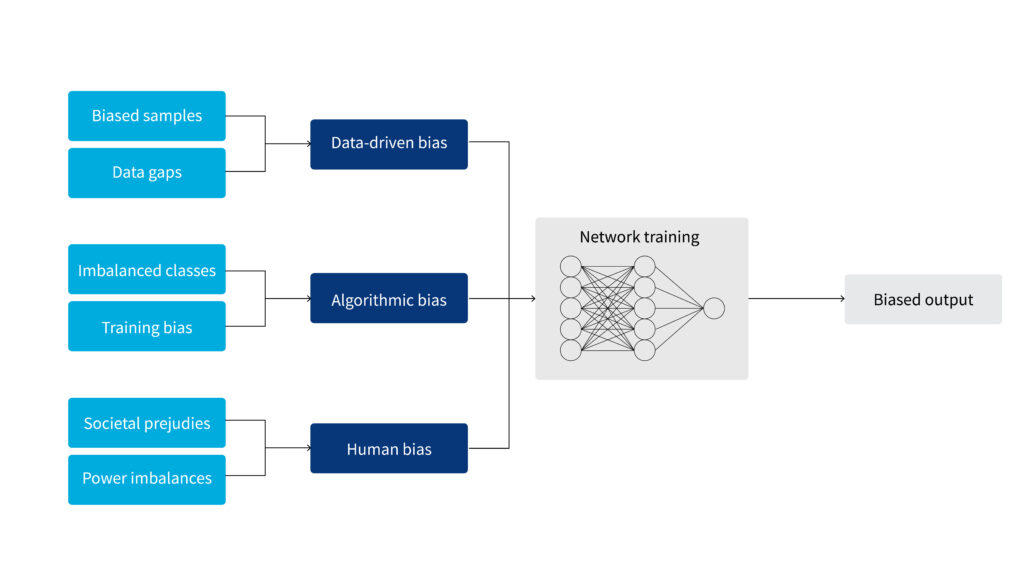

Given this lack of diversity in data representation and poor data quality, AI systems can unintentionally perpetuate biases present in training data, affecting outcomes across gender, ethnicity, age and other factors. These biases can lead to unfair treatment and disparities in healthcare delivery, even when the data has been considered to be of good quality. As part of maximising their product’s value, product owners must recognise the impact of biased AI systems, help both the team and the stakeholders understand these biases, and work towards mitigating them to ensure equitable healthcare solutions.

Patterns (N Y). 2021 Oct 8;2(10):100347. doi: 10.1016/j.patter.2021.100347

Product owners’ contribution

Some of the areas where we as product owners can contribute to ethical AI development are leadership and advocacy, stakeholder engagement, and education and awareness.

Leadership and advocacy

At ERNI, we product owners champion ethical AI practices by prioritising them in project goals and objectives. Having a background in HealthTech makes us aware not only of the regulations but also of the market trends and needs. By advocating for ethical guidelines and frameworks, we can steer AI development towards responsible and fair practices with support from Quality and Regulatory teams. As an example of an ethical guideline that we put into practice as product owners, wherever AI has been involved, we transparently tag the result as such.

Stakeholder engagement

Involving diverse stakeholders ensures that varied perspectives are considered, leading to more equitable AI solutions. As product owners at ERNI, we seek to engage with stakeholders from different backgrounds to ensure that AI systems are developed with inclusiveness in mind. More than ever, it is crucial that we have as many different perspectives as possible.

Education and awareness

Educating teams about ethical AI practices and potential biases is crucial for fostering a culture of responsibility. Whether through recurring lunch-and-learn meetings with short case studies, one-time workshops with hands-on sessions, specific ramp-ups for new hires or KPIs that track the education’s effectiveness, product owners should implement continuous learning and awareness-building initiatives to ensure teams are equipped to address ethical challenges in AI development. Additionally, we promote using our internal AI tool, which is GDPR compliant and does not store any information whatsoever, making sure that all team members understand the criticality of data privacy.

Ensuring data quality through AI-driven approaches

We should move towards AI-driven data cleaning not only to promote ethical AI but also to improve efficiency. Similar to our experience with the benefits of automated testing, AI-driven data cleaning can reduce effort by a factor of 5 to 50. Ensuring data quality through regular audits, validation processes and the use of AI-driven data cleaning tools is essential. Trust in AI solutions depends on the quality of data being processed, as misclassified data can diminish user confidence and worsen performance and accuracy. While AI can be a useful tool, we always perform a deep review of its outputs and validate whether the results can be trusted.

Embedding diversity by design

We can also embed diversity into the design process from the outset, ensuring it is a fundamental aspect rather than an afterthought. As product owners, we try to incorporate diverse perspectives and data sets into the development process to create inclusive AI systems.

Identifying and mitigating bias

Lastly, to identify and reduce biases in AI systems, we can implement strategies such as diverse data collection, bias audits and regular reviews. Whenever possible, we prioritise these strategies to ensure AI systems are fair and equitable.
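A bias audit can start very simply: compute the same performance metric per group and look at the gap. The following sketch assumes a binary classifier and uses recall (the share of actual positive cases that the model detects) as the metric; the record format, group names and numbers are hypothetical and only serve as an illustration.

```python
from collections import defaultdict


def recall_by_group(records):
    """Compute recall (true-positive rate) per group.

    `records` is an iterable of (group, actual, predicted) with boolean labels.
    Groups with no actual positives are omitted from the result.
    """
    positives = defaultdict(int)
    hits = defaultdict(int)
    for group, actual, predicted in records:
        if actual:
            positives[group] += 1
            if predicted:
                hits[group] += 1
    return {group: hits[group] / positives[group] for group in positives}


def recall_gap(recalls):
    """Difference between the best-served and worst-served group."""
    return max(recalls.values()) - min(recalls.values())


# Hypothetical audit data: (group, actual condition, model prediction).
records = (
    [("X", True, True)] * 9 + [("X", True, False)] * 1
    + [("Y", True, True)] * 6 + [("Y", True, False)] * 4
    + [("X", False, False)] * 5 + [("Y", False, False)] * 5
)

recalls = recall_by_group(records)
for group, value in sorted(recalls.items()):
    print(f"group {group}: recall {value:.2f}")
print(f"recall gap: {recall_gap(recalls):.2f}")
```

Which metric and which gap are acceptable depends on the product and its clinical context; the point of the sketch is only that the audit can be automated and repeated in regular reviews.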

Conclusion

Ethical AI development is essential for building trust and ensuring the effectiveness of AI systems in the HealthTech sector. As product owners, we are encouraged to take proactive steps in promoting ethical practices and diversity in data representation. By addressing these challenges, we can ensure that AI systems deliver equitable healthcare solutions.

Don’t forget to share any experience or strategy for ethical AI development with fellow product owners and stakeholders in the HealthTech sector to advance ethical AI practices!

If you are interested in more data-related topics, you can also read our eBook about data-driven projects.